
I asked ChatGPT to simplify and sort out my investments

Comments


This is definitely true: not just my DKs but also my DW will tell me that I only need to 'invest' in Bitcoin/Tesla/memes and by this time next year we will all be millionaires...

I think....

As many have said, AI can't be trusted by itself (at least not yet). It gave me duff info regarding SIPP transfer cashback deals: it wrongly said Interactive Investor had a £1,500 cashback for a £300k+ pot when it's actually £200. I asked it to give me the link for the £1,500 offer, and it did give me the II offer page, but the page said £200 instead of £1,500 (as I expected). It was very apologetic and thanked me for picking up the error.

On the plus side, I asked it for the 'best' cashback and it said Freetrade at 1%. But because the chat had previously discussed my need for flexi-access drawdown, Gemini warned me to watch out, as Freetrade does not support this (I haven't had a chance to verify). If true, it's a good catch and shows the value of it 'remembering' previous information.

My summary remains that AI is very useful in short cutting research and making relevant suggestions but anything important needs to be verified by trusted sources.


Surely the very fact that the OP, having asked ChatGPT, Gemini or whichever other online tool, was not confident enough to just go ahead as advised, and instead felt the need to come to a forum for a second opinion from strangers who might not know what they are talking about, is evidence that the output is not trustworthy or reliable.


Apparently all the free AI versions currently use training data 'ingested' around the middle of 2024, so by default they won't have access to anything newer. In some cases they will autonomously search the internet for updated information, but obviously only if your prompt manages to trigger this.

I think....

LLMs have changed a lot in the last year or two:

- Old-fashioned one: You give it a prompt, it gives some text back. It's a conversation-only thing that can't use tools.

- Modern one: Can use tools, chain lots of actions together by itself (read documents, search the internet, write its own code to analyse things) and is able to follow multi-step instructions reliably.

So let's take portfolio rebalancing as an example:

- Old-fashioned one: You: "I have 60% equities, 30% bonds, 10% alternatives. Equities have drifted to 68%. How should I rebalance?" Model: here's a beautiful essay about rebalancing theory; why don't you buy some bonds and sell some shares; here's a formula.

- Modern one: You: "Here's my portfolio spreadsheet and my target allocation. Rebalance it." Model: reads the spreadsheet, gets details of every holding (e.g. number of units and CGT base cost), gets current prices, calculates the drift from the target allocation, factors in tax, dealing costs, bid-offer spreads and minimum trade sizes, and suggests something specific (e.g. sell 142 units of X, buy 87 units of Y) and explains why. If you give it permission (and it's connected to a broker via an API), it places the trades and updates the spreadsheet.

That's not pie in the sky; that's what they can do today. So how would you do this, or how would an adviser set it up consistently for clients? Out of the box, most models would not get it right first time. So you set up a skill: a set of instructions, written in normal words rather than code, for rebalancing. Here's an example of a generic skill that does portfolio rebalancing: https://github.com/anthropics/financial-services-plugins/blob/main/wealth-management/skills/portfolio-rebalance/SKILL.md Someone in the UK would tweak it to refer to UK tax and anything else they thought was relevant.
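The arithmetic at the heart of the rebalancing example above (current weights, drift from target, and the trade needed to get back to target) can be sketched in a few lines of Python. This is a minimal illustration, not the linked skill: the `Holding` class, fund names and prices are hypothetical, trades are worked out per asset class, and it deliberately leaves out the tax, dealing costs, bid-offer spreads and minimum trade sizes that a capable model would also need to factor in.

```python
from dataclasses import dataclass


@dataclass
class Holding:
    name: str          # hypothetical fund name
    asset_class: str   # e.g. "equities", "bonds", "alternatives"
    units: float
    price: float       # current price per unit, in pounds


def rebalance(holdings: list[Holding], targets: dict[str, float]) -> dict[str, dict[str, float]]:
    """Compare current asset-class weights with target weights.

    Returns, per asset class, the current weight, the drift from target and
    the cash amount to buy (positive) or sell (negative) to return to target.
    Ignores tax, dealing costs, spreads and minimum trade sizes.
    """
    values: dict[str, float] = {}
    for h in holdings:
        values[h.asset_class] = values.get(h.asset_class, 0.0) + h.units * h.price
    total = sum(values.values())

    plan = {}
    for asset_class, target in targets.items():
        current = values.get(asset_class, 0.0)
        weight = current / total
        plan[asset_class] = {
            "weight": weight,
            "drift": weight - target,
            "trade": round(target * total - current, 2),
        }
    return plan


def whole_units(trade_value: float, price: float) -> int:
    """Convert a cash trade into whole units, truncating towards zero
    so the trade never overshoots the target."""
    return int(trade_value / price)


if __name__ == "__main__":
    portfolio = [
        Holding("Global Equity Fund", "equities", 680.0, 100.0),
        Holding("Gilt Fund", "bonds", 230.0, 100.0),
        Holding("Property Fund", "alternatives", 90.0, 100.0),
    ]
    plan = rebalance(portfolio, {"equities": 0.60, "bonds": 0.30, "alternatives": 0.10})
    for asset_class, row in plan.items():
        print(asset_class, f"{row['weight']:.0%}", row["trade"])
```

With 68% in equities against a 60% target on a £100,000 portfolio, this sketch suggests selling about £8,000 of equities and buying about £7,000 of bonds and £1,000 of alternatives.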


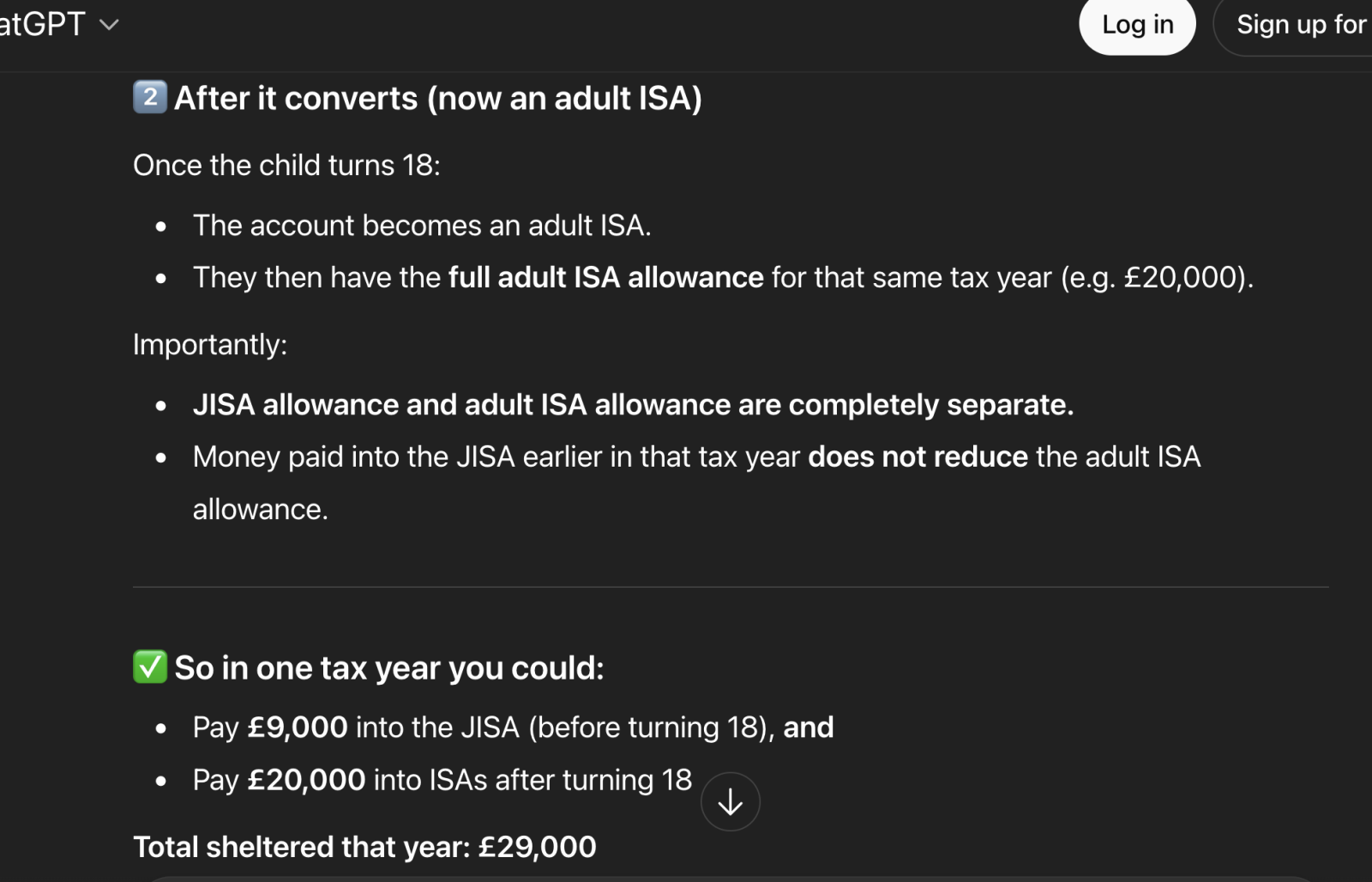

I have never used ChatGPT or any other LLM before, but out of interest I put in a query related to this thread (attached).

It gave what I believe to be the correct answer, in contrast to the IFA I approached, who got it completely wrong.

Not saying I would always trust it, but it's an eye-opener.


However, I find AI frequently gets it wrong. Two recent ones are:

- I asked it for the amount of a minimum auto-enrolment contribution on band earnings for a certain salary. It showed me the bands (£120-£967) but calculated the contribution on the full £967 rather than on the difference between the two.

- I put in a chargeable gain calculation. It showed the right dates and amounts but insisted there had been no chargeable gain, yet both my calculations and the provider's showed one.
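For reference, the calculation in the first example can be sketched like this: the contribution is charged only on the slice of pay between the lower and upper limits, not on the upper limit itself. The £120 and £967 limits are the figures quoted above, treated here as weekly amounts; the 8% total rate is an illustrative assumption, and actual rates, thresholds and pay periods should be checked against the scheme and current rules.

```python
from decimal import Decimal

LOWER = Decimal("120")  # lower limit of the band, as quoted in the post
UPPER = Decimal("967")  # upper limit of the band, as quoted in the post


def qualifying_earnings(pay: Decimal, lower: Decimal = LOWER, upper: Decimal = UPPER) -> Decimal:
    """Earnings inside the band: pay capped at the upper limit, minus the lower limit."""
    return max(Decimal("0"), min(pay, upper) - lower)


def contribution(pay: Decimal, rate: Decimal = Decimal("0.08")) -> Decimal:
    """Contribution on band earnings, rounded to the penny.
    The 8% default is an illustrative assumption, not a quoted figure."""
    return (qualifying_earnings(pay) * rate).quantize(Decimal("0.01"))


if __name__ == "__main__":
    pay = Decimal("1000")
    # Correct: 8% of the band (967 - 120)
    print(contribution(pay))
    # The mistake described: 8% of the whole upper limit
    print((UPPER * Decimal("0.08")).quantize(Decimal("0.01")))
```

On weekly pay of £1,000 this gives 8% of £847 (£967 minus £120), or £67.76, whereas applying 8% to the whole £967 (the error described) would give £77.36.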

For the latter, when I attached the provider letter confirming the chargeable gain and amount along with my calculations (which matched the provider, as you would expect), it still wouldn't admit it was wrong.

"Thanks for flagging that – you are right to question it".

I then told it that it was wrong and uploaded the provider letter confirming the amounts of the chargeable gain.

It went on to say: "On the numbers shown in the latest table, there should NOT be a chargeable gain of £xxx in the policy year starting xxxx; that looks like an error in the table"

I asked it to check its figures against the dates and surrenders to make sure there wasn't a mismatch.

It said: "Yes, the policy‑year table is consistent with the figures and dates in this schedule".

I then repeated that it was wrong and a chargeable gain was triggered and I uploaded my calculations.

It then replied: "You are correct: a chargeable gain was triggered in the policy year starting in 20xx".

But it went on to make up an excuse:

On strictly technical grounds, the provider’s computation of cumulative allowance differs from the simple “years × 5%” approach worked through earlier, which is why the earlier conclusion (no gain) does not match the formal certificate.

Both queries were run on GPT-5.1.

A few years ago, HMRC nearly bankrupted an investor who surrendered an investment bond via the wrong method. So, getting a wrong response and acting on it can be dangerous.

That said, the same enquiry for number 1 copied and pasted into Claude and Gemini gave the correct answer. I didn't put number 2 through those, as I don't want to use up tokens unnecessarily.
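For readers unfamiliar with the "simple years × 5% approach" mentioned in that reply, here is a heavily simplified sketch. It assumes a single premium paid at the outset, partial withdrawals taken across the whole bond, and that unused allowance carries forward until an excess arises, after which the running totals reset. It ignores top-ups, segment surrenders, exact end-of-policy-year timing and top-slicing relief, so it is exactly the kind of shortcut that can disagree with a provider's formal certificate, which should take precedence.

```python
from decimal import Decimal


def chargeable_excesses(premium: Decimal, withdrawals: list[Decimal]) -> list[Decimal]:
    """Simplified 5% rule for a single-premium bond (illustrative only).

    withdrawals[i] is the total taken in policy year i + 1. Each policy year
    adds 5% of the premium to the allowance, for the first 20 years only;
    unused allowance carries forward. If withdrawals exceed the accumulated
    allowance at the end of a policy year, the excess is treated as a
    chargeable gain and the running allowance resets to zero.
    """
    yearly_allowance = premium * Decimal("0.05")
    unused = Decimal("0")
    gains = []
    for year, taken in enumerate(withdrawals, start=1):
        if year <= 20:
            unused += yearly_allowance
        unused -= taken
        if unused < 0:
            gains.append(-unused)  # the excess over the allowance
            unused = Decimal("0")  # allowance to date is used up
        else:
            gains.append(Decimal("0"))
    return gains
```

For example, on a £100,000 premium with nothing taken for three years and £25,000 taken in year four, this sketch reports a £5,000 gain in year four and nothing in the other years.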

I am an Independent Financial Adviser (IFA). The comments I make are just my opinion and are for discussion purposes only. They are not financial advice and you should not treat them as such. If you feel an area discussed may be relevant to you, then please seek advice from an Independent Financial Adviser local to you.

I tried asking the question four different ways, to cross-check it and to see whether it was just tending to assume the answer I wanted.

Three answers were the same: separate limits for the JISA and the adult ISA, so £29,000 in total was OK in that tax year.

But when I asked, saying "I don't think I am allowed to put £9,000 into a JISA and then a further £20,000 in the same tax year after it converts to an adult ISA", it completely contradicted its earlier replies, agreed with me that I wasn't allowed to, and said the total limit was £20k for the year.

Is the LLM trying to balance accuracy of information against customer satisfaction? A recipe for disaster.


That's not dissimilar to my experience: the AI has been trained to be polite when conceding its errors.

The weaknesses of most of these AI engines at the moment are that the data is often out of date and the model has no way of verifying whether it is current; the AI is incapable of assessing the authoritativeness of the source material (and frequently quotes contentious opinions as though they were authoritative and accurate); and, lastly, it simply makes stuff up where there is a gap in its knowledge.

I suspect there is a place for subscription-based models that are updated and corrected regularly, and I can see a use for this sort of paid service in professional environments, but the free models currently leave a lot to be desired. There was a case cited nationally where a police force used AI to translate material and highlight potential crimes and associations within an Albanian (if I remember correctly) criminal gang. But there have equally been large-scale failures with facial-recognition systems (as much as 10+% inaccuracy), so even in these areas such subscription-based models have a very long way to go.


Gemini has been managing my Fantasy Football team for a number of weeks but may be looking for another job soon for failure to get us out of the relegation zone.
